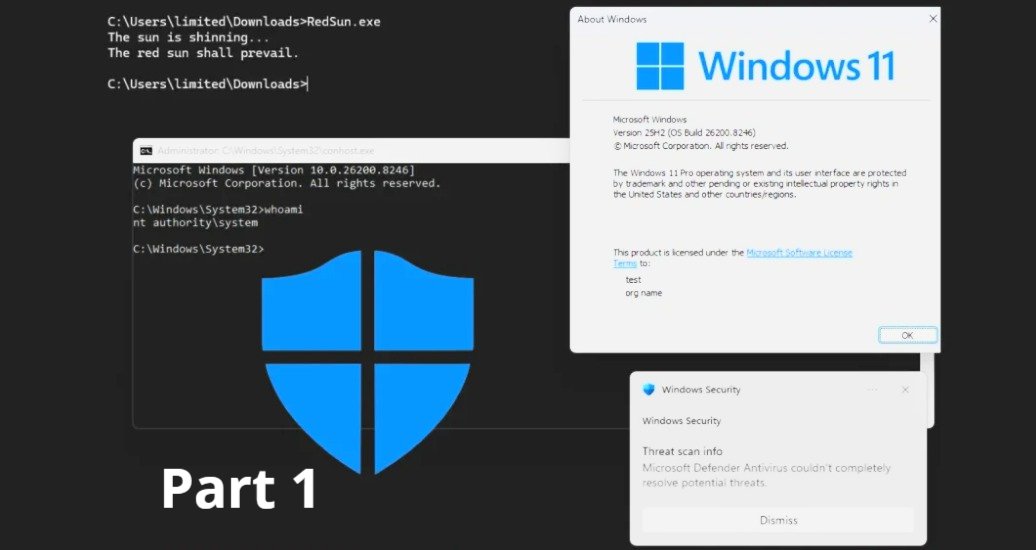

AI agents are quickly becoming “the new UI” for security operations: analysts ask a question, the agent investigates, and workflows kick off automatically. That speed is exactly why recent critical findings like the MS-Agent Shell tool vulnerability (CVE-2026-2256) should be treated as an enterprise wake-up call, not a niche developer issue. In the MS-Agent case, improper input sanitisation in a tool designed to run OS commands can allow arbitrary command execution and lead to full host compromise. (securityweek.com)

For security teams adopting conversational AI to bridge skills gaps, the lesson is clear: agentic assistance needs enterprise-grade controls—especially around tool execution, memory persistence, and real-time threat context—so that “helpful automation” doesn’t become a new privilege-escalation path.

Why AI agent hijacking is a different class of SOC risk

Traditional SOC automation (playbooks, scripts, SOAR) usually runs within well-defined inputs and guardrails. Agentic AI changes the boundary:

- Agents ingest untrusted content (alerts, email bodies, tickets, threat reports, chat messages).

- Agents can be connected to powerful tools (shell, connectors, SaaS APIs, cloud control planes).

- Agents may maintain persistent memory across sessions (preferences, “lessons learned,” investigation context).

That combination is exactly what makes “agent hijacking” so dangerous: the attacker doesn’t always need to exploit a network service—sometimes they just need to influence the agent’s inputs. Hence, the agent misuses its own tools.

In the MS-Agent example, the Shell tool’s blacklist-style filtering can be bypassed, enabling malicious command execution as part of normal agent flow. (securityweek.com)

The most common hijack paths in Sentinel-like environments

In security operations platforms (including Microsoft Sentinel-style workflows), agent hijacking typically shows up as one of these patterns:

Indirectly, the blast radius in real-world environments—such as long—term memory, external tool traces don’t freely contaminate the main agent's-term memory, and later triggers unsafe behaviour by prompting is stored in long-term memory and later triggers unsafe behaviour. In long—term memory, external tool traces don’t freely contaminate the main agent's-term memory and later triggers unsafe behaviour prompt injection via investigation data, such as the MS-Agent Shell tool vulnerability (CVE-2026-2256).

An attacker places instructions inside data the agent will read (alert details, logs, incident descriptions, a pasted “IOC list,” etc.). If the agent treats that content as “trusted instructions,” it may:

- Run unnecessary queries

- Disclose sensitive outputs

- Trigger risky workflows

Tool abuse and “over-permissioned” actions

If the agent can execute actions (close incidents, isolate endpoints, change firewall rules, run scripts), hijacking becomes materially impactful. The MS-Agent Shell tool issue shows why tool boundaries are where small validation mistakes become full compromise. (securityweek.com)

Persistent compromise via memory poisoning

A growing body of research and emerging guidance highlights memory poisoning: malicious content gets stored in long-term memory, then triggers unsafe behaviour later—across sessions. OWASP’s Agent Memory Guard project describes this as an attack on mutable, persistent agent state and proposes integrity checks, policy enforcement, and rollback. (owasp.org)

Research such as A-MemGuard and MemoryGraft further demonstrates how poisoned memory can create stealthy, durable behaviour drift that’s hard to detect with one-time audits. (arxiv.org)

Strategic defences: build layered controls, not “one prompt”

If your SOC is adopting conversational AI for triage and investigation, the goal isn’t to “write a safer system prompt.” The goal is to build an architecture where compromise is contained, actions are verifiable, and memory is governed.

Put tool execution behind hard isolation boundaries

Treat high-impact tools (Shell, PowerShell, Python runtimes, Azure/Graph admin APIs) like production admin access:

- Run tool execution in sandboxed environments

- Apply least privilege at the process identity level

- Prefer allowlists over regex denylists for commands and arguments (explicitly recommended in MS-Agent mitigation guidance) (securityweek.com)

If an agent must run commands, ensure the environment is disposable, monitored, and not holding secrets that can be easily read from disk.

Enforce “memory persistence checks” as a first-class control

If your agent keeps long-term memory (preferences, repeated steps, remediation patterns), treat memory as an attack surface with:

- Integrity baselines (hashing/signing)

- Policy controls on reads/writes

- Snapshotting + rollback to known-good states

This is aligned with the direction of OWASP Agent Memory Guard (integrity validation, anomaly detection, declarative policies, forensics, rollback). (owasp.org)

The security failure mode to avoid: an attacker injects one “helpful” looking instruction today that becomes an agent’s trusted procedure next week.

Separate “reasoning” from “doing” with gated, schema-validated outputs

A practical pattern is a two-step execution:

- The agent produces a structured action plan (what it wants to do and why).

- A policy layer validates it (permissions, scope, change window, ticket linkage, risk score).

- Only then do tools execute.

This aligns with modern isolation-by-design approaches, where external tool traces don’t freely contaminate the main agent context and only schema-validated outputs cross boundaries. (arxiv.org)

Make threat intelligence real-time and operational, not passive

Hijacks evolve. Your controls should adapt in near real time:

- Enrich investigations with up-to-date threat intel

- Automatically re-score severity when new intel arrives

- Detect “IOC-shaped” prompt injection patterns in inbound artifacts (for example, suspicious instructions embedded in what appears to be a log snippet)

Security operations AI should be proactive: not just answering questions, but continuously verifying whether the context has become hostile.

Use ticketing and change management as a security control (not just workflow)

SOC teams often view ticketing as “process overhead.” In agentic operations, ticketing is a guardrail:

- High-risk actions require a ticket

- Tickets capture the reasoning, approvals, and evidence trail

- Rollback steps are documented before the action

This is especially relevant for multi-tenant operations and MSSPs, where separation of duties and audit trails matter.

A control map you can operationalise quickly

If you’re using AI to reduce KQL dependency and accelerate triage, the platform must be designed to keep automation safe—even when inputs are hostile.

SecQube’s approach aligns well with this reality: AI-guided triage and investigation via Harvey, designed to help analysts of any skill level operate quickly and consistently, while keeping workflows structured. (secqube.com)

Just as importantly, SecQube emphasises operational foundations that help control blast radius in real environments—like multi-tenant security operations, built-in ticketing and change management, and Azure Lighthouse-based connectivity. (secqube.com)

If you’re building resilient AI-driven SOC operations, the key question to ask is:

Can my agent explain what it’s about to do, prove it’s allowed to do it, and recover safely if the context was manipulated?

Practical next steps for security leaders (30-day plan)

- Inventory every agent tool: identify anything equivalent to Shell, admin APIs, or “write” actions.

- Reduce privileges: apply least privilege identities per tenant/workspace and per tool.

- Add gated execution: require structured plans + policy approval for high-impact actions.

- Implement memory governance: define what can be stored, for how long, and how its integrity is checked.

- Operationalise threat intel: feed it into severity scoring and investigation guidance continuously.

- Run red-team simulations: test indirect prompt injection using realistic incident artifacts.

If your current agent can run commands, change configurations, or access secrets, treat it like a privileged admin endpoint—and defend it accordingly.

Building AI that stays helpful under attack

The MS-Agent vulnerability is a reminder that agentic systems don’t fail like traditional apps: they fail at the intersection of untrusted inputs, powerful tools, and persistent state. (securityweek.com)

Enterprise-grade defences aren’t about slowing automation down. They’re about making automation dependable: clear guardrails, resilient memory, and real-time threat context—so your AI assistant remains a force multiplier, even when adversaries try to turn it into an insider.

If you want to see how conversational AI can support Microsoft Sentinel operations while staying user-centric and structured, explore SecQube’s Harvey capability and platform approach. (secqube.com)

.svg)

.svg)