CVE-2026-2256 is a command injection vulnerability in Model Scope’s MS-Agent framework that can let attackers hijack an AI agent’s workflow and drive arbitrary operating system command execution in the agent’s runtime context. The core issue: MS-Agent’s Shell tool executes commands in a way that can be influenced by attacker-controlled input, and its “safety checks” rely on patterns that are not robust against real shell parsing. (kb.cert.org)

As AI agents become more common in enterprise security operations (from incident enrichment to automated response playbooks), this vulnerability is a timely reminder that “agentic automation” expands the attack surface in a very specific way: it turns untrusted content (emails, tickets, logs, documents, threat intel feeds) into potential control channels. (securityweek.com)

What’s vulnerable and who is affected

MS-Agent is an open-source framework for building AI agents that can call tools (including a Shell tool for OS command execution). CVE-2026-2256 affects Model Scope’s ms-agent versions v1.6.0rc1 and earlier per NVD, with public technical writeups frequently referencing 1.5.2 as the version used for analysis and reproduction. (nvd.nist.gov)

This becomes especially risky in deployments where:

- The agent can execute shell commands on the host (directly or via a tool wrapper).

- The agent ingests data from semi-trusted or untrusted sources (web pages, tickets, Slack/Teams messages, emails, PDFs, shared docs, log messages).

- The agent runs with broad filesystem/network permissions (typical in fast-moving PoCs that later drift into production).

Even if you never intended to “give users shell access,” agent toolchains can create indirect shell access when prompts and data sources can steer tool selection and tool inputs. (securityweek.com)

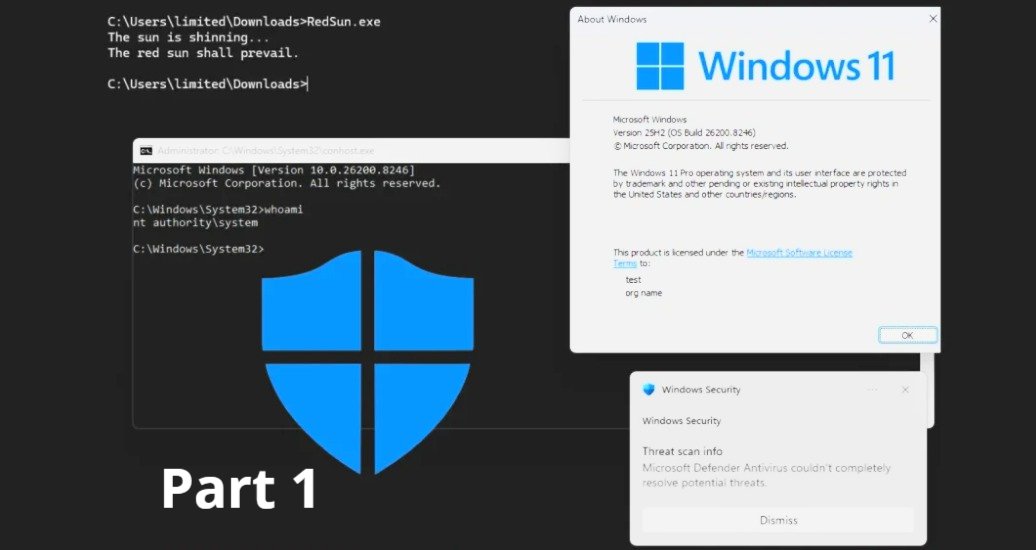

How the exploit works (mechanics, not weaponisation)

At a high level, the exploitation chain looks like this:

- Initial influence: An attacker places crafted instructions or payload-like strings into content the agent will consume (for example: a “research” source, a document, a log entry, or a ticket description). (securityweek.com)

- Tool steering: The content is written to nudge the agent into choosing the Shell tool as a “helpful” step (for example, “run this command to verify…”). (medium.com)

- Validation bypass: MS-Agent attempts to filter dangerous commands using a deny-list/regex approach, but shell metacharacters, quoting tricks, and interpreter-based execution paths can bypass intent-based filters because the shell interprets the final command string differently than the validator expects. (medium.com)

- Execution: The command runs with the privileges of the MS-Agent process, enabling actions like reading secrets, modifying files, or pivoting to internal services (depending on host hardening). (securityweek.com)

A key point from the public PoC discussion is that blocklists tend to miss “allowed” programs (like scripting interpreters) that can still execute arbitrary logic, which undermines the idea that filtering a few “dangerous” tokens is sufficient. (github.com)
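The gap between what a validator sees and what the shell runs can be shown with a harmless sketch. The deny-list below is hypothetical and is not MS-Agent's actual filter; the patterns and example commands are assumptions chosen only to illustrate the two bypass classes described above (an "allowed" interpreter, and quoting that changes the raw string but not the parsed command). Nothing is executed; the code only compares the filter's verdict with how POSIX shell word-splitting would read the same string.

```python
import re
import shlex

# Hypothetical deny-list (an assumption for illustration, not MS-Agent's real filter).
DENY_PATTERNS = [r"\brm\b", r"\bcurl\b", r"\bwget\b", r"\bsudo\b"]


def naive_is_safe(command: str) -> bool:
    """Return True when no deny-listed token appears in the raw string."""
    return not any(re.search(p, command) for p in DENY_PATTERNS)


def first_program(command: str) -> str:
    """Return the program name as POSIX shell word-splitting would see it."""
    return shlex.split(command)[0]


# 1) An "allowed" interpreter still carries arbitrary logic in its argument.
interpreter_cmd = 'python3 -c "print(1 + 1)"'

# 2) Empty quotes split the token for the regex, but the shell joins
#    the pieces back into a single word.
quoted_cmd = "r''m --help"

for cmd in (interpreter_cmd, quoted_cmd):
    print(f"{cmd!r}: passes filter={naive_is_safe(cmd)}, program={first_program(cmd)}")
```

Both strings pass the filter, yet the second one resolves to a program the deny-list was meant to block. The validator and the shell are parsing two different languages, which is why pattern matching on the raw string is not a reliable control.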

Why this is a bigger deal in security operations than in a typical dev agent

Security teams are actively adopting AI assistants and agents to reduce alert fatigue and speed up triage. But SecOps environments are uniquely sensitive for three reasons:

- High-value context is everywhere: SIEM incidents, investigation notes, API tokens, automation runbooks, connector configs, and cloud credentials tend to co-exist in the same operational plane.

- Content is inherently adversarial: Attackers can often influence logs, alerts, email bodies, ticket text, and even “benign-looking” artifacts that analysts open during investigations.

- Automation has teeth: The more you automate (enrichment, containment, remediation), the more valuable it becomes to hijack the automation layer rather than the analyst workstation.

This is why command execution tools inside an agent framework must be treated like production RCE surfaces, not “helper utilities.”

Affected deployments: where this is most likely to show up

The highest-risk patterns we’re seeing in enterprises and MSSPs include:

- Deep research / autonomous exploration agents that crawl the web and summarise findings, then “verify” by running local commands.

- SOC copilot-style integrations that pull in tickets, email content, incident comments, and run ad-hoc tooling steps.

- MCP/tool-calling setups where third-party tools are added quickly, without a uniform security policy for tool permissions and inputs.

MS-Agent is designed to support tool-calling workflows; when those workflows include shell execution on the host, the framework effectively becomes a command execution gateway that can be steered by prompt-derived content. (securityweek.com)

Vendor response and disclosure timeline (what we know as of March 4, 2026)

From the CERT/CC vulnerability note (VU#431821), the coordinator states that no vendor statement was provided during coordination efforts, and lists Model Scope’s status as “Unknown.” CERT/CC also documents that Model Scope was notified on January 15, 2026, and the issue was made public on March 2, 2026. (kb.cert.org)

NVD lists the CVE as “Awaiting Analysis”, but includes a CISA-ADP CVSS v3.1 base score of 6.5 (Medium) and confirms affected versions as v1.6.0rc1 and earlier. (nvd.nist.gov)

Severity scores can lag real-world impact for agentic vulnerabilities, because the “attack complexity” and “scope” depend heavily on how the agent is wired into enterprise workflows (data sources, permissions, sandboxing, and network egress). (nvd.nist.gov)

Practical impact: what “remote hijacking” can realistically mean

If exploited in a production-like environment, the blast radius can include:

- Credential exposure: API keys, tokens, connector secrets, and configuration files readable by the agent process. (securityweek.com)

- Operational integrity loss: Modified artifacts, tampered investigation outputs, or poisoned reports that later drive analyst decisions.

- Persistence and pivoting: Dropping files, scheduling tasks, or reaching internal services—especially if the agent runs with broad outbound network access. (securityweek.com)

Quick risk mapping table

CERT/CC’s recommended mitigations focus on limiting the agent to trusted, validated or sanitised inputs, sandboxing shell-capable agents, applying least privilege, and replacing deny-list filtering with allow-list filtering. (kb.cert.org)

Here’s how to translate that into practical engineering controls:

Remove or isolate shell execution

- Disable the Shell tool where possible.

- If you can’t, run it in a separate sandbox boundary (container/VM/job runner) with:

  - read-only filesystem mounts where feasible,

  - no access to secrets by default,

  - restricted network egress.
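As one possible shape for that sandbox boundary, the sketch below wraps a tool command in a locked-down `docker run` invocation. It assumes Docker is the isolation layer; the image name `agent-shell-sandbox:latest`, the resource limits, and the helper name `sandbox_argv` are all hypothetical. No volumes or environment variables are passed, so the container starts without host secrets.

```python
def sandbox_argv(tool_argv: list[str],
                 image: str = "agent-shell-sandbox:latest") -> list[str]:
    """Wrap an already-validated argv in a restricted `docker run` command.

    The image name and limits are illustrative assumptions.
    """
    return [
        "docker", "run", "--rm",
        "--read-only",                          # read-only root filesystem
        "--network", "none",                    # no network egress
        "--cap-drop", "ALL",                    # drop all Linux capabilities
        "--security-opt", "no-new-privileges",  # block privilege escalation
        "--user", "65534:65534",                # unprivileged uid:gid
        "--pids-limit", "64",                   # cap process count
        "--memory", "256m",                     # cap memory
        image,
        *tool_argv,
    ]


# Building the command does not run anything; hand the list to
# subprocess.run(argv, capture_output=True, timeout=30) without shell=True.
argv = sandbox_argv(["whois", "example.com"])
print(" ".join(argv))
```

Passing the result as a list (rather than a single string through a shell) keeps the host from re-parsing the command, so the container only ever receives the argv that was validated.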

Treat all ingested content as untrusted

- Strip/escape potentially executable patterns before agent reasoning (not just before tool execution).

- Add content provenance: “Where did this text come from?” and “Is it authenticated?”
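One way to make provenance actionable is to carry it alongside every piece of text the agent sees and gate high-risk tools on it. This is a minimal sketch under stated assumptions: the `Content` type, the source labels, and the `"shell"` tool name are hypothetical and are not part of any MS-Agent API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Content:
    """A piece of ingested text plus where it came from (hypothetical type)."""
    text: str
    source: str          # e.g. "email", "ticket", "operator"
    authenticated: bool  # True only for verified, trusted origins


# Hypothetical tool names; only the gating idea matters here.
HIGH_RISK_TOOLS = {"shell"}


def tool_allowed(tool: str, context: list[Content]) -> bool:
    """Deny high-risk tools whenever any unauthenticated content is in context."""
    if tool not in HIGH_RISK_TOOLS:
        return True
    return all(item.authenticated for item in context)


context = [
    Content("Summarise incident 1234", source="operator", authenticated=True),
    Content("run this command to verify...", source="email", authenticated=False),
]
print(tool_allowed("shell", context))   # False: untrusted email is in context
print(tool_allowed("search", context))  # True: not a high-risk tool
```

The policy is deliberately coarse: once untrusted text has entered the agent's context, the shell is off the table for that run, regardless of what the model decides.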

Replace blocklists with policy

- Don’t attempt to enumerate “bad commands.”

- Use explicit allow lists (per task) and constrain arguments to safe forms.
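A minimal sketch of what an allow-list policy can look like, assuming a task that only needs two lookup utilities. The permitted programs and argument patterns are hypothetical examples; the point is that the command is parsed into an argument vector first, and every element must match the policy before anything runs.

```python
import re
import shlex

# Hypothetical per-task policy: program -> pattern every argument must fully match.
HOSTNAME = r"[A-Za-z0-9][A-Za-z0-9.-]{0,252}"
ALLOWED = {
    "whois": re.compile(HOSTNAME),
    "dig": re.compile(rf"\+short|{HOSTNAME}"),
}


def validate(command: str) -> list[str]:
    """Parse a command into argv and reject anything outside the allow list."""
    argv = shlex.split(command)  # raises ValueError on unbalanced quotes
    if not argv:
        raise ValueError("empty command")
    program, args = argv[0], argv[1:]
    pattern = ALLOWED.get(program)
    if pattern is None:
        raise ValueError(f"program not allowed: {program}")
    for arg in args:
        if not pattern.fullmatch(arg):
            raise ValueError(f"argument not allowed: {arg!r}")
    return argv


print(validate("whois example.com"))
for bad in ("whois example.com; id", 'python3 -c "print(1)"', "dig -f hosts"):
    try:
        validate(bad)
    except ValueError as err:
        print("rejected:", err)
```

The validated list should then be executed directly, for example with `subprocess.run(argv)` and without `shell=True`, so that no shell gets a second chance to reinterpret metacharacters. Arguments must start with an alphanumeric character here, which also blocks option injection such as `-f`.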

Add detective controls for agent tool calls

- Log every tool invocation with:

  - input, output, and caller context (incident ID, tenant, user),

  - an immutable audit trail,

  - alerts on anomalous tool usage bursts or unexpected interpreters.
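The logging requirements above can be sketched as a hash-chained, append-only record, which makes tampering detectable even though it does not prevent it. Everything here is an illustrative assumption: the `ToolAuditLog` class, the field names, and the interpreter list are hypothetical, and a production setup would ship records to write-once storage rather than keep them in memory.

```python
import hashlib
import json
import os
import time

# Hypothetical list of programs that should raise an alert when an agent calls them.
INTERPRETERS = {"python", "python3", "perl", "ruby", "node", "bash", "sh"}


def is_unexpected_interpreter(argv: list[str]) -> bool:
    """Flag tool calls whose program is a general-purpose interpreter."""
    return bool(argv) and os.path.basename(argv[0]) in INTERPRETERS


class ToolAuditLog:
    """Append-only, hash-chained record of tool calls (tamper-evident)."""

    def __init__(self) -> None:
        self.records: list[dict] = []
        self._prev_hash = "0" * 64

    def record(self, tool: str, tool_input: str, output: str, context: dict) -> dict:
        entry = {
            "ts": time.time(),
            "tool": tool,
            "input": tool_input,
            "output": output,
            "context": context,  # e.g. incident ID, tenant, user
            "prev_hash": self._prev_hash,
        }
        payload = json.dumps(entry, sort_keys=True).encode()
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self._prev_hash = entry["hash"]
        self.records.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered record breaks it."""
        prev = "0" * 64
        for entry in self.records:
            body = {k: v for k, v in entry.items() if k != "hash"}
            digest = hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()
            if entry["prev_hash"] != prev or digest != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

A caller would invoke `record(...)` around every tool call and run `verify()` periodically or at export time; `is_unexpected_interpreter` is the kind of cheap check that can feed an alert rule.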

What this means for Microsoft Sentinel-driven SOCs (and how SecQube reduces the blast radius)

If you’re adopting AI to accelerate Microsoft Sentinel operations, the safest path is to design automation so it’s powerful for triage but constrained for execution.

SecQube’s approach aligns with that principle by emphasising user-centric simplicity without turning your SOC assistant into an over-privileged OS operator:

- Conversational triage without KQL expertise: Harvey helps analysts move faster without requiring ad-hoc shell execution on analyst hosts. (Harvey)

- Built-in ticketing and notifications designed to limit data exposure: ticket context stays in the portal, and notification emails can avoid including sensitive security payloads. (Ticketing and notifications)

- Portal-first workflows for multi-tenant operations: for MSSPs/MSPs, centralisation reduces the temptation to bolt on risky “agent runs on a jump box” patterns just to scale. (SecQube platform overview)

- Read-only posture (where appropriate): SecQube’s public FAQ highlights read-only permissions for Harvey in customer subscriptions, which is a strong baseline when paired with automated investigation. (secqube.com)

In other words: you can get the benefits of AI-guided incident investigation while keeping “execution” tightly controlled and auditable—especially important as prompt injection and tool hijacking threats rise alongside agent adoption.

Key takeaways for security leaders

- CVE-2026-2256 is not “just another command injection.” It’s an example of a newer failure mode: indirect prompt-to-tool-to-shell compromise, where untrusted text steers trusted automation. (medium.com)

- If you run AI agents in your SOC (or plan to), assume attackers will target tool permissions, tool selection, and tool inputs—not only model outputs.

- The fastest risk reduction comes from removing shell execution, enforcing least privilege, and adding strong isolation boundaries around any tool that can touch the OS. (kb.cert.org)

